Nvidia Unveils New B200 GPU

- By Paul Mah

- March 19, 2024

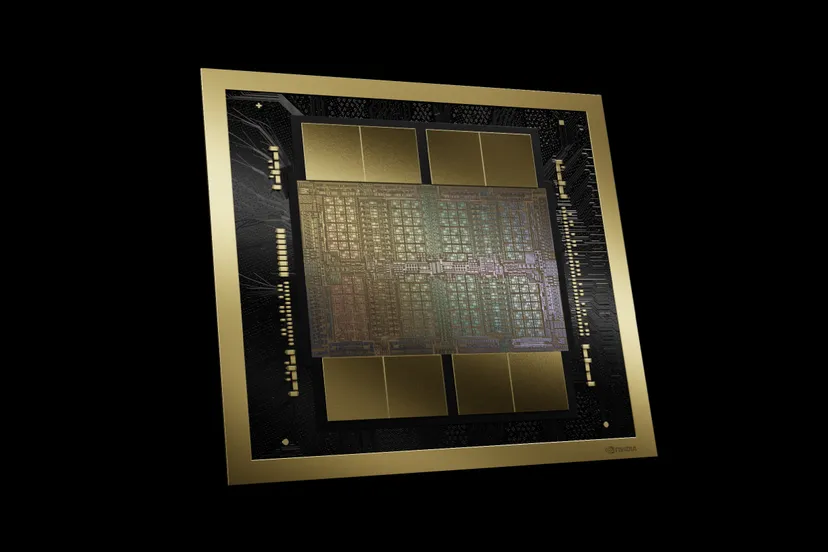

Nvidia earlier today announced its Blackwell platform, designed to dramatically ramp up the computing capabilities needed to support AI. At the heart of the new Blackwell architecture are two new GPUs: the B100 and B200.

The more powerful of the two, the B200, is made of two separate dies connected by a 10 TB/second chip-to-chip link, giving a GPU with 208 billion transistors, 2.6 times the 80 billion transistors of the H100 GPU.

Blackwell is named in honor of David Harold Blackwell – a mathematician who specialized in game theory and statistics. It succeeds the Nvidia Hopper architecture launched two years ago.

New GPU family

Nvidia says the HGX B200 achieves up to 15x higher inference performance on massive AI models than previous systems powered by Nvidia’s Hopper generation GPUs. Under the hood, each die is paired with four stacks of HBM3E memory, for a total of 192GB of HBM3E RAM per B200 GPU.
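The memory figures above imply a per-stack capacity that the article does not state directly. A minimal sketch of that arithmetic follows, assuming all stacks are the same size; the function and constant names are illustrative only.

```python
# Figures quoted in the article: two dies per B200, four HBM3E stacks
# per die, 192GB of HBM3E in total.
DIES_PER_GPU = 2
STACKS_PER_DIE = 4
TOTAL_HBM_GB = 192


def hbm_per_stack_gb(total_gb: int, dies: int, stacks_per_die: int) -> float:
    """Capacity of one stack, assuming every stack is equally sized."""
    return total_gb / (dies * stacks_per_die)


stacks = DIES_PER_GPU * STACKS_PER_DIE
per_stack = hbm_per_stack_gb(TOTAL_HBM_GB, DIES_PER_GPU, STACKS_PER_DIE)
print(f"{stacks} stacks, {per_stack:.0f}GB each")  # 8 stacks, 24GB each
```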

As reported by The Verge, training a 1.8 trillion parameter model would have previously taken 8,000 Hopper GPUs and 15 megawatts of power. Today, 2,000 Blackwell GPUs can do it while consuming just four megawatts.
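To put those figures side by side, the short sketch below works out the reduction in GPU count and power draw. It uses only the numbers quoted above; the class name is hypothetical, and because the article does not say what the power figures include, the per-GPU value is simply total power averaged over the GPU count.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TrainingCluster:
    """A training setup described by GPU count and total power draw."""
    name: str
    gpus: int
    power_mw: float

    @property
    def kw_per_gpu(self) -> float:
        # Total power averaged per GPU, in kilowatts.
        return self.power_mw * 1000 / self.gpus


hopper = TrainingCluster("Hopper", gpus=8000, power_mw=15.0)
blackwell = TrainingCluster("Blackwell", gpus=2000, power_mw=4.0)

gpu_reduction = hopper.gpus / blackwell.gpus
power_reduction = hopper.power_mw / blackwell.power_mw

print(f"{gpu_reduction:.0f}x fewer GPUs, {power_reduction:.2f}x less power")
for cluster in (hopper, blackwell):
    print(f"{cluster.name}: {cluster.kw_per_gpu:.3f} kW per GPU")
```

On these numbers the saving comes from needing a quarter of the GPUs rather than from each GPU drawing less: average power per GPU rises slightly, from 1.875 kW to 2 kW.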

On a GPT-3 LLM benchmark with 175 billion parameters, Nvidia says the GB200 offers seven times the performance of an H100 and four times the training speed.

Upgraded ecosystem

To ensure speedy connectivity for training the most complex AI models, Nvidia also unveiled the fifth-generation NVLink, which delivers groundbreaking 1.8TB/s bidirectional throughput per GPU for seamless high-speed communication of up to 576 GPUs.

For larger uses that require supercomputer-level computing, Nvidia took the wraps off the new Nvidia GB200 NVL72, which it says is up to the task of training trillion-parameter models.

The GB200 NVL72 is a custom rack solution featuring multi-node, liquid-cooled Nvidia GPU servers. It is available in configurations starting from 72 Blackwell GPUs and 36 Grace CPUs and acts as a single system offering 1.4 exaflops of AI performance and 30TB of fast memory.
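As a rough sanity check on the rack-level numbers, the sketch below divides the quoted AI performance evenly across the 72 GPUs. This is an illustration only: the article does not state the numeric precision behind the 1.4 exaflops figure, and an even split across GPUs is an assumption.

```python
# Figures quoted in the article for the base GB200 NVL72 configuration.
GPUS = 72
CPUS = 36
AI_EXAFLOPS = 1.4

# 1 exaflop = 1,000 petaflops; assume performance is split evenly per GPU.
petaflops_per_gpu = AI_EXAFLOPS * 1000 / GPUS
gpus_per_cpu = GPUS / CPUS

print(f"{petaflops_per_gpu:.1f} petaflops per GPU")   # 19.4 petaflops per GPU
print(f"{gpus_per_cpu:.0f} GPUs per Grace CPU")       # 2 GPUs per Grace CPU
```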

Notably, Nvidia says applications will have coherent access to a unified memory space. This simplifies programming and supports the larger memory needs of trillion-parameter LLMs, transformer models for multimodal tasks, models for large-scale simulations, and generative models for 3D data.

“For three decades we’ve pursued accelerated computing, with the goal of enabling transformative breakthroughs like deep learning and AI,” said Jensen Huang, founder and CEO of Nvidia.

“Generative AI is the defining technology of our time. Blackwell is the engine to power this new industrial revolution. Working with the most dynamic companies in the world, we will realize the promise of AI for every industry.”

Blackwell-based products will be available from partners starting later this year. For now, Amazon, Google, Meta, Microsoft, Oracle, and OpenAI are among the first firms that have confirmed that they will deploy Blackwell GPUs.

Image credit: Nvidia

Paul Mah

Paul Mah is the editor of DSAITrends, where he reports on the latest developments in data science and AI. A former system administrator, programmer, and IT lecturer, he enjoys writing both code and prose.